AI That Works With Your Existing Systems

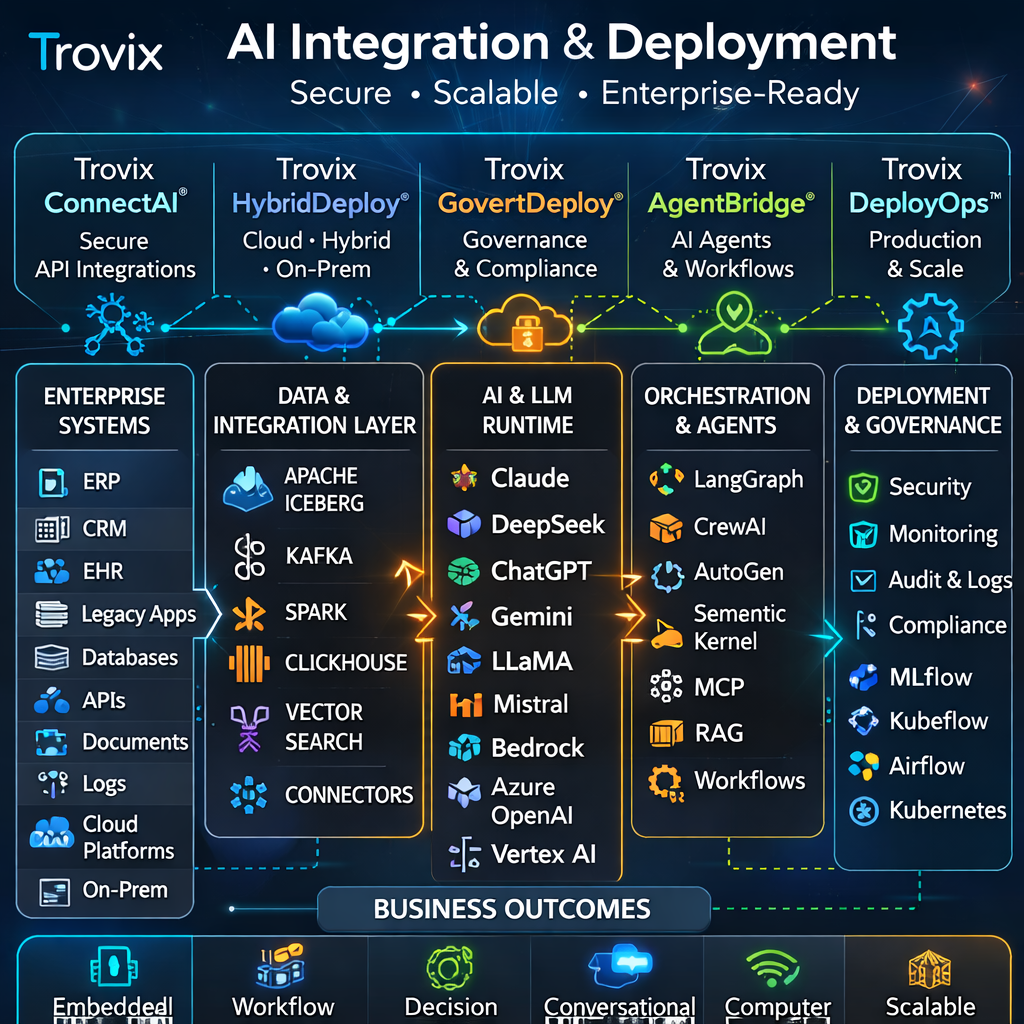

Trovix integrates Artificial Intelligence (AI), Machine Learning (ML), generative AI, Large Language Models (LLMs), AI agents, and intelligent automation into legacy and modern enterprise environments without disrupting operations. We help organisations deploy AI where it creates value most quickly: inside existing applications, operational workflows, analytics environments, service platforms, and decision support systems.

Our integration and deployment approach combines secure API-first engineering, hybrid cloud architecture, containerised deployment, model serving, enterprise connectors, governance controls, MLOps, LLMOps, AgentOps, and production monitoring so that AI systems can scale reliably across real business environments.

We work with modern AI ecosystems including Claude, DeepSeek, ChatGPT, Gemini, LLaMA, Mistral, Amazon Bedrock, Azure OpenAI, Google Vertex AI, NVIDIA AI infrastructure, Apache Iceberg, ClickHouse, Kafka, Spark, MLflow, Kubeflow, Airflow, LangGraph, CrewAI, AutoGen, Semantic Kernel, LlamaIndex, MCP-compatible integrations, vector search, embeddings, RAG pipelines, Kubernetes, serverless APIs, and inference services.

For clients, this means AI that can be integrated into existing ERP, CRM, EHR, service management, support, operations, analytics, and line-of-business systems with strong security, compliance, observability, and performance.

Trovix AI Integration & Deployment Solutions

Trovix ConnectAI© – Secure Enterprise AI Integration Platform

Trovix ConnectAI© enables organisations to integrate AI services, ML models, LLM assistants, and intelligent automation into existing enterprise systems through secure APIs and governed service layers.

What it does for clients:

- Connects AI capabilities to ERP, CRM, EHR, ticketing, analytics, data, and workflow platforms

- Supports secure API-based integrations between models, enterprise applications, and internal tools

- Enables AI features to be embedded into portals, dashboards, workflows, and customer-facing systems

- Reduces integration friction between modern AI services and legacy environments

Technology stack:

- API gateways, service meshes, secure REST/GraphQL integrations, and event-driven architectures

- MCP-compatible integrations, tool calling, and enterprise action controls

- Cloud-native deployment across AWS, Azure, and Google Cloud

- Support for Bedrock, Azure OpenAI, Vertex AI, and multi-model LLM connectivity

Client benefits:

- Faster rollout of AI features into existing business systems

- Less disruption to current operations and application estates

- More secure and maintainable AI integration patterns

- Clearer path from experimentation to production adoption
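To make the idea of a governed service layer concrete, here is a minimal sketch of the pattern: every call to a model passes through one gateway that checks policy, routes to a backend, and records an audit entry. It is an illustration only, not the ConnectAI implementation. The names `GovernedGateway`, `echo_model`, the role `support_agent`, and the backend `summariser` are all hypothetical, and the model is a local stand-in rather than a real vendor API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class GovernedGateway:
    """Hypothetical service layer: checks policy, routes to a backend, logs the call."""

    backends: Dict[str, Callable[[str], str]]
    allowed: Dict[str, Set[str]]  # role -> backend names that role may call
    audit_log: List[dict] = field(default_factory=list)

    def call(self, role: str, backend: str, prompt: str) -> str:
        permitted = backend in self.allowed.get(role, set())
        # Record every attempt, including denied ones, so access is traceable.
        self.audit_log.append(
            {"role": role, "backend": backend, "permitted": permitted}
        )
        if not permitted:
            raise PermissionError(f"role {role!r} may not call backend {backend!r}")
        return self.backends[backend](prompt)


def echo_model(prompt: str) -> str:
    # Stand-in for a real model client; no vendor API is called here.
    return f"summary: {prompt[:20]}"


gateway = GovernedGateway(
    backends={"summariser": echo_model},
    allowed={"support_agent": {"summariser"}},
)
```

In this sketch, `gateway.call("support_agent", "summariser", text)` returns the stand-in summary, while the same call from a role that is not in `allowed` raises `PermissionError` and still leaves an audit entry. Keeping policy and logging in one place is what lets existing applications adopt AI features without each one re-implementing access control.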

Trovix HybridDeploy© – Cloud, Hybrid & On-Prem AI Deployment Platform

Trovix HybridDeploy© provides flexible deployment patterns for AI, ML, LLM, and agentic systems across cloud, hybrid, and on-premises environments.

What it does for clients:

- Deploys AI workloads across public cloud, private cloud, data centre, and hybrid infrastructure

- Supports regulated, latency-sensitive, and security-driven deployment requirements

- Enables containerised deployment, model serving, and inference management at scale

- Provides a consistent architecture for multi-environment AI delivery

Technology stack:

- Kubernetes, container orchestration, inference services, and service-based deployment models

- AWS, Azure, GCP, hybrid networking, and enterprise-grade runtime controls

- NVIDIA GPU-backed training and inference deployment for advanced workloads

- Support for private LLM deployments, managed cloud models, and hybrid data access patterns

Client benefits:

- Greater deployment flexibility across enterprise environments

- Stronger alignment with security, latency, and compliance requirements

- Reduced dependency on a single cloud or architecture pattern

- Scalable infrastructure for long-term AI adoption

Trovix GovernDeploy© – Compliant AI Rollout & Runtime Governance Layer

Trovix GovernDeploy© ensures that AI integrations are deployed with governance, observability, compliance controls, and enterprise runtime safeguards.

What it does for clients:

- Applies governance, access control, and policy enforcement across deployed AI services

- Supports auditability, traceability, and monitoring of model and agent behaviour

- Provides secure rollout controls for production AI systems and LLM applications

- Enables safe expansion of AI use cases across business-critical workflows

Technology stack:

- MLflow, Kubeflow, Airflow, observability tooling, and deployment governance workflows

- Prompt controls, model versioning, inference monitoring, and LLMOps evaluation pipelines

- Security controls for systems based on Claude, DeepSeek, ChatGPT, Gemini, LLaMA, Bedrock, and Azure OpenAI

- Encryption, logging, audit trails, and enterprise policy enforcement

Client benefits:

- Safer production rollout of AI systems

- Stronger governance and compliance readiness

- Better visibility into AI performance and usage

- Lower operational and security risk during scale-up

Trovix AgentBridge© – Agent Integration & Workflow Activation Layer

Trovix AgentBridge© connects AI agents, copilots, and intelligent automation services into enterprise workflows so they can do useful work beyond simple chat interactions.

What it does for clients:

- Integrates AI agents with enterprise tools, APIs, business processes, and operational workflows

- Supports task execution, decision support, orchestration, and workflow activation

- Combines human-in-the-loop oversight with agent-driven automation

- Turns LLMs and copilots into practical workflow participants

Technology stack:

- LangGraph, CrewAI, AutoGen, Semantic Kernel, and LlamaIndex orchestration frameworks

- MCP-style tool integration, API connectors, and enterprise action routing

- LLM backends including Claude, DeepSeek, ChatGPT, Gemini, Mistral, and Bedrock-hosted models

- Event-driven workflow triggers and secure runtime execution layers

Client benefits:

- More useful AI automation across real workflows

- Reduced manual execution across repetitive or multi-step tasks

- Improved operational throughput and coordination

- Practical deployment path for agentic AI inside the enterprise
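Combining human-in-the-loop oversight with agent-driven automation can be sketched as a small action router: low-risk tools run immediately, while sensitive tools wait for a human decision. This is a simplified illustration under stated assumptions, not the AgentBridge implementation. The `Tool` class, the `route_action` function, and the tool names `lookup_order` and `issue_refund` are hypothetical, and no agent framework is used.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Tool:
    run: Callable[[dict], str]
    needs_approval: bool  # True for actions a human should confirm first


def route_action(
    tools: Dict[str, Tool],
    name: str,
    args: dict,
    approve: Optional[Callable[[str, dict], bool]] = None,
) -> str:
    """Run a tool requested by an agent, pausing for human approval when required."""
    tool = tools.get(name)
    if tool is None:
        # Agents may request tools that do not exist; refuse rather than guess.
        return f"rejected: unknown tool {name!r}"
    if tool.needs_approval and not (approve and approve(name, args)):
        return f"pending: {name!r} awaits human approval"
    return tool.run(args)


tools = {
    "lookup_order": Tool(
        run=lambda a: f"order {a['id']} is shipped", needs_approval=False
    ),
    "issue_refund": Tool(
        run=lambda a: f"refunded order {a['id']}", needs_approval=True
    ),
}
```

Here `route_action(tools, "lookup_order", {"id": 7})` runs straight away, whereas `route_action(tools, "issue_refund", {"id": 7})` returns a pending message until an `approve` callback confirms it. In a real deployment the callback would typically be backed by a ticketing or approval workflow rather than an in-process function.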

Trovix DeployOps© – Production AI Delivery, Monitoring & Scalability Platform

Trovix DeployOps© provides the engineering and operational controls required to move AI from prototype into stable production use across enterprise systems.

What it does for clients:

- Implements deployment pipelines for AI models, LLM apps, RAG systems, and agentic services

- Supports rollback, versioning, monitoring, observability, and performance management

- Enables scale-out architecture for growing AI workloads and user demand

- Provides operational guardrails for reliable enterprise adoption

Technology stack:

- CI/CD pipelines, Kubernetes, inference services, autoscaling, and API-first delivery

- ClickHouse, telemetry pipelines, and runtime observability for AI operations

- Apache Iceberg, Kafka, and Spark for integration with enterprise data environments

- Cloud and hybrid deployment models with scalable compute and GPU-backed services

Client benefits:

- Faster, more reliable production rollout of AI solutions

- Better scalability as adoption grows

- Stronger operational resilience and visibility

- Reduced risk of fragile, one-off AI deployments
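The versioning and rollback controls mentioned above can be illustrated with a minimal sketch: a registry records each promoted model version, and a release step reverts to the previous version when a monitored error rate exceeds a threshold. This is an assumption-laden toy, not the DeployOps implementation. `ModelRegistry`, `release`, and the 5% `max_error_rate` default are hypothetical, and the error rate is passed in directly rather than read from real telemetry.

```python
from typing import List, Optional


class ModelRegistry:
    """Hypothetical in-memory registry tracking which model version serves traffic."""

    def __init__(self) -> None:
        self._history: List[str] = []  # versions in the order they were promoted

    @property
    def live(self) -> Optional[str]:
        return self._history[-1] if self._history else None

    def promote(self, version: str) -> None:
        self._history.append(version)

    def rollback(self) -> Optional[str]:
        """Drop the live version and return the one that is live afterwards."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self.live


def release(
    registry: ModelRegistry,
    version: str,
    error_rate: float,
    max_error_rate: float = 0.05,  # illustrative threshold, not a recommendation
) -> Optional[str]:
    """Promote a version, then roll back if its observed error rate is too high."""
    registry.promote(version)
    if error_rate > max_error_rate:
        return registry.rollback()
    return registry.live
```

With this sketch, releasing `"v1"` at a 1% error rate leaves `"v1"` live, and a later release of `"v2"` at a 20% error rate rolls back so that `"v1"` is live again. A production pipeline would persist the history and gate promotion on richer signals such as latency and evaluation scores.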

What We Deliver

- Secure API-based integrations: AI services, LLMs, agents, and predictive models connected to enterprise applications through governed APIs and service layers

- Cloud, hybrid, and on-prem deployments: Flexible runtime models for public cloud, private cloud, data centre, and regulated enterprise environments

- Governance, compliance, and scalability: Controls for access, monitoring, auditability, performance, resilience, and long-term AI growth

- Legacy and modern system interoperability: Integration patterns that work across existing systems without forcing disruptive re-platforming

- Agent and workflow integration: Deployment of AI copilots and agents into real business processes and operational workflows

AI Integration & Deployment Architecture Flow

Trovix AI integration and deployment platforms connect enterprise systems, models, APIs, runtime controls, and workflows into a secure, production-ready architecture.

Enterprise Systems & Data Environments

ERP / CRM / EHR / Legacy Apps / APIs / Data Platforms / Documents / Logs / Cloud Services / On-Prem Systems

↓

Integration & Data Connectivity Layer

API Gateways / Secure Connectors / Apache Iceberg / Kafka / Spark / ClickHouse / Cloud Storage / Event Streams

↓

AI, LLM & Agent Runtime Layer

Claude / DeepSeek / ChatGPT / Gemini / LLaMA / Mistral / Bedrock / Azure OpenAI / Vertex AI / RAG / MCP / LangGraph / CrewAI / Inference Services

↓

Deployment, Governance & Scale Layer

Kubernetes / MLflow / Kubeflow / Airflow / Security Controls / Monitoring / Auditability / Policy Enforcement / Autoscaling

↓

Business Integration Outcomes

Embedded AI Features / Workflow Automation / Decision Support / Enterprise Assistants / Scalable Rollout / Secure Production Adoption

Business Outcomes

Trovix helps clients deploy AI into existing enterprise estates in a way that is secure, scalable, and commercially useful, without requiring disruptive replacement of current systems.

- Faster integration of AI into existing business systems

- Lower deployment risk across legacy and modern environments

- Better governance, compliance, and operational control

- More scalable foundations for AI assistants, agents, and intelligent automation

- Practical enterprise AI adoption with measurable business value

Explore AI Integration: