Production-Grade AI/ML Model Engineering, LLMOps & Agent Orchestration

Custom AI, machine learning, and agentic systems engineered for production performance, scalability, and enterprise reliability.

Many organisations can build a promising model in a notebook, but struggle to move from experimentation to secure, scalable production systems. Trovix helps clients bridge that gap by combining AI engineering, machine learning operations (MLOps), LLMOps, model governance, GPU-accelerated infrastructure, and enterprise orchestration into a unified delivery approach.

We design, build, deploy, and manage modern AI systems across the full stack: foundation models (Claude, DeepSeek, ChatGPT, Gemini, LLaMA, Mistral); managed AI platforms (Amazon Bedrock, Azure OpenAI, Google Vertex AI) and NVIDIA AI infrastructure; data platforms (Apache Iceberg, ClickHouse, Kafka, Spark); MLOps tooling (MLflow, Kubeflow); and agent and retrieval frameworks (LangGraph, CrewAI, AutoGen, LlamaIndex). We combine these with vector databases, RAG pipelines, MCP-compatible tool integrations, and API-first enterprise deployment patterns.

Our model engineering and orchestration solutions are designed for organisations that need AI systems to operate reliably across real business environments, including finance, healthcare, logistics, manufacturing, public sector, cybersecurity, and enterprise operations.

Trovix AI/ML Engineering Software Solutions

Trovix ModelForge© – AI/ML Model Engineering & Lifecycle Platform

Trovix ModelForge© is our production AI and machine learning engineering platform for building, training, validating, deploying, and monitoring models at enterprise scale.

What it does for clients:

- Builds and operationalises predictive ML models for classification, forecasting, anomaly detection, recommendation, optimisation, and risk scoring

- Supports the full model lifecycle from experimentation to production deployment

- Automates retraining, model validation, version control, and monitoring

- Enables secure deployment into APIs, internal systems, and business workflows

Technology stack:

- MLflow, Kubeflow, Airflow, and CI/CD-based MLOps pipelines

- PyTorch, TensorFlow, XGBoost, Scikit-learn, and GPU-enabled training

- NVIDIA acceleration for training and inference performance

- AWS SageMaker, Azure Machine Learning, and Google Vertex AI integration

Client benefits:

- Shorter time from prototype to production

- Lower technical debt and stronger reuse of AI components

- Improved model reliability, auditability, and operational performance

- Scalable foundations for future AI products and internal AI use cases
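
To make the idea of automated model validation concrete, here is a minimal sketch of a promotion gate that decides whether a retrained model may replace the one in production. The `EvalReport` type, the `should_promote` function, the metric names, and the thresholds are all hypothetical and chosen for illustration; they are not the ModelForge interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalReport:
    """Hypothetical evaluation summary for one model version."""
    version: str
    auc: float             # quality metric, higher is better
    p95_latency_ms: float  # serving latency, lower is better


def should_promote(candidate: EvalReport, production: EvalReport,
                   min_auc_gain: float = 0.005,
                   max_latency_ms: float = 200.0) -> bool:
    """Promote only if the candidate is measurably better than production
    and stays inside the latency budget. Thresholds are illustrative."""
    better_quality = candidate.auc >= production.auc + min_auc_gain
    within_budget = candidate.p95_latency_ms <= max_latency_ms
    return better_quality and within_budget


production = EvalReport("v12", auc=0.871, p95_latency_ms=140.0)
candidate = EvalReport("v13", auc=0.884, p95_latency_ms=155.0)
slow_candidate = EvalReport("v14", auc=0.900, p95_latency_ms=320.0)

print(should_promote(candidate, production))       # True
print(should_promote(slow_candidate, production))  # False: over latency budget
```

In practice a gate like this would typically run as one step of a CI/CD pipeline, so that every retraining run is checked against the same criteria before deployment.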

Trovix LLMStack© – Enterprise LLM Engineering & LLMOps Platform

Trovix LLMStack© helps clients build enterprise-grade generative AI applications using modern large language models, retrieval pipelines, evaluation frameworks, and secure deployment controls.

What it does for clients:

- Builds domain-specific AI assistants, copilots, and knowledge systems

- Supports LLM application development with Claude, DeepSeek, ChatGPT, Gemini, LLaMA, and Mistral models

- Implements retrieval-augmented generation (RAG), prompt orchestration, tool calling, and response grounding

- Provides evaluation, observability, and lifecycle management for enterprise LLM deployments

Technology stack:

- Amazon Bedrock, Azure OpenAI, Google Vertex AI, OpenAI-compatible APIs, Anthropic Claude, DeepSeek, and open-weight LLM integrations

- Vector search, embeddings, semantic retrieval, and document intelligence pipelines

- LlamaIndex, LangChain, and LLMOps evaluation workflows

- Enterprise controls for security, access, tracing, and response quality

Client benefits:

- Secure and scalable deployment of generative AI solutions

- Better knowledge access, summarisation, and natural language interaction

- Reduced hallucination risk through grounding and retrieval

- Reusable architecture for enterprise AI assistants and client-facing AI products
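
As a rough illustration of how retrieval grounds an LLM response, the sketch below ranks a few documents against a question and builds a prompt restricted to the retrieved context. It assumes a toy word-count "embedding" and an in-memory document store; the document IDs, texts, and function names are hypothetical, and a production system would use an embedding model and a vector database instead.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; real deployments would index enterprise documents.
DOCUMENTS = {
    "leave-policy": "Employees accrue 25 days of annual leave per year.",
    "expense-policy": "Expense claims must be submitted within 30 days.",
    "security-policy": "Laptops must use full disk encryption.",
}


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the IDs of the k documents most similar to the question."""
    q = embed(question)
    ranked = sorted(DOCUMENTS,
                    key=lambda d: cosine(q, embed(DOCUMENTS[d])),
                    reverse=True)
    return ranked[:k]


def build_grounded_prompt(question: str, k: int = 1) -> str:
    """Assemble a prompt that tells the model to answer from context only."""
    context = "\n".join(f"[{d}] {DOCUMENTS[d]}" for d in retrieve(question, k))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


print(build_grounded_prompt("How many days of annual leave do employees get?"))
```

Tagging each context passage with its document ID is one simple way to let the answer cite its source, which supports the traceability goals described above.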

Trovix AgentFabric© – AI Agents, Multi-Agent Workflows & Orchestration

Trovix AgentFabric© is our orchestration layer for agentic AI systems that can reason over data, use tools, coordinate tasks, and automate operational workflows while keeping humans in control where needed.

What it does for clients:

- Deploys AI agents that investigate issues, summarise findings, recommend actions, and trigger workflows

- Supports multi-agent collaboration for research, operations, analytics, and decision support

- Integrates with enterprise tools, APIs, data platforms, and internal systems

- Combines autonomous reasoning with human-in-the-loop controls for sensitive workflows

Technology stack:

- LangGraph, CrewAI, AutoGen, Semantic Kernel, and LlamaIndex-based orchestration patterns

- MCP-compatible tool integrations for controlled access to enterprise systems

- Tool calling, workflow automation, and event-driven execution

- LLM integrations with Claude, DeepSeek, GPT, Gemini, and Bedrock-hosted models

Client benefits:

- Reduced manual workload across complex business processes

- Smarter investigation, coordination, and execution across systems

- Improved speed and consistency of operational decision-making

- Practical route from dashboards and copilots to intelligent autonomous workflows
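
The combination of tool calling and human-in-the-loop control can be sketched as a small dispatcher that lets an agent run read-only tools freely but holds sensitive actions until a person approves them. The tool names, the `Tool` type, and the `approve` callback below are hypothetical and do not reflect the AgentFabric API or any specific framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tool:
    """Hypothetical tool descriptor: a name, a callable, and an approval flag."""
    name: str
    run: Callable[[dict], str]
    requires_approval: bool = False


def lookup_ticket(args: dict) -> str:
    return f"Ticket {args['ticket_id']}: disk usage alert on host db-01"


def restart_service(args: dict) -> str:
    return f"Restarted {args['service']}"


# Allow-list of tools the agent may request; anything else is rejected.
TOOLS = {
    t.name: t
    for t in (
        Tool("lookup_ticket", lookup_ticket),
        Tool("restart_service", restart_service, requires_approval=True),
    )
}


def execute(tool_name: str, args: dict,
            approve: Callable[[str, dict], bool]) -> str:
    """Run a tool requested by an agent, gating sensitive tools on approval."""
    tool = TOOLS.get(tool_name)
    if tool is None:
        return f"rejected: unknown tool {tool_name!r}"
    if tool.requires_approval and not approve(tool_name, args):
        return f"blocked: {tool_name} needs human approval"
    return tool.run(args)


def deny_all(tool_name: str, args: dict) -> bool:
    """Stand-in for a human reviewer who has not approved anything yet."""
    return False


print(execute("lookup_ticket", {"ticket_id": "INC-1042"}, deny_all))
print(execute("restart_service", {"service": "postgres"}, deny_all))
```

Keeping the approval decision in a callback means the same dispatcher could be wired to a chat prompt, a ticketing workflow, or an automated policy check, depending on how sensitive the workflow is.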

Trovix FeatureFlow© – Scalable Feature Engineering & AI Data Pipelines

Trovix FeatureFlow© provides the data engineering layer needed to convert operational data into high-quality ML-ready features and reusable AI pipelines.

What it does for clients:

- Transforms raw enterprise data into clean, governed features for model training and inference

- Supports batch and real-time feature engineering at scale

- Combines structured, semi-structured, document, and event data into AI-ready data products

- Creates reusable pipelines that reduce duplication across teams and use cases

Technology stack:

- Apache Iceberg lakehouse architecture for scalable and open data foundations

- ClickHouse for real-time analytics, feature serving, and operational intelligence

- Kafka, Spark, and cloud-native processing pipelines

- AWS, Azure, and GCP storage and compute services

Client benefits:

- Higher-quality inputs for AI and ML models

- Better consistency between training and production environments

- Faster experimentation and deployment cycles

- Scalable data foundations for predictive analytics and agentic AI
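
One common way to keep training and production consistent is to define each feature once and reuse that definition in both the batch and the online path. The sketch below assumes a hypothetical order record with `amount`, `items`, and `placed_at` fields; the field and function names are illustrative only.

```python
from datetime import datetime


def order_features(order: dict) -> dict:
    """Single feature definition shared by batch training and online serving."""
    placed = datetime.fromisoformat(order["placed_at"])
    return {
        "amount_per_item": order["amount"] / max(order["items"], 1),
        "is_weekend": placed.weekday() >= 5,  # Saturday=5, Sunday=6
        "hour_of_day": placed.hour,
    }


def build_training_rows(orders: list[dict]) -> list[dict]:
    """Batch path: apply the shared definition to historical orders."""
    return [order_features(o) for o in orders]


def online_features(order: dict) -> dict:
    """Online path: apply the same definition to a single live order."""
    return order_features(order)


order = {"amount": 120.0, "items": 4, "placed_at": "2024-06-01T14:30:00"}

# Both paths produce identical features for the same input record.
print(build_training_rows([order])[0] == online_features(order))  # True
```

Because both paths call the same function, a change to a feature definition reaches training and inference together rather than drifting apart over time.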

Trovix DeployAI© – API-Driven AI Integration & Secure Production Deployment

Trovix DeployAI© enables production AI deployment into enterprise applications, operational systems, customer platforms, and regulated environments.

What it does for clients:

- Deploys models and AI services as secure APIs, microservices, or embedded enterprise components

- Integrates AI into ERP, CRM, EHR, customer service, command-and-control, analytics, and workflow systems

- Supports hybrid cloud, private cloud, and regulated deployment patterns

- Provides monitoring, versioning, rollback, and production control mechanisms

Technology stack:

- Kubernetes, container orchestration, and service-based deployment models

- API gateways, inference endpoints, event-driven integration, and secure authentication

- Cloud-native deployment on AWS, Azure, and Google Cloud

- NVIDIA GPU-backed inference for high-performance AI workloads

Client benefits:

- Reliable AI deployment into existing enterprise environments

- Reduced integration friction and faster real-world value delivery

- Stronger security, observability, and governance across AI services

- Clear path from innovation to operational adoption
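
Versioning and rollback can be pictured as a deployment history in which the most recent entry is the live version. The in-memory `ModelRegistry` class below is a hypothetical stand-in for a real model registry or deployment controller, written only to show the control flow.

```python
from typing import Optional


class ModelRegistry:
    """In-memory stand-in for a registry that tracks deployed versions."""

    def __init__(self) -> None:
        self._history: list[str] = []

    def deploy(self, version: str) -> None:
        """Record a new version as live."""
        self._history.append(version)

    def rollback(self) -> str:
        """Revert to the previously deployed version and return it."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self._history[-1]

    @property
    def live(self) -> Optional[str]:
        """The version currently serving traffic, if any."""
        return self._history[-1] if self._history else None


registry = ModelRegistry()
registry.deploy("fraud-model:1.4.0")
registry.deploy("fraud-model:1.5.0")
print(registry.live)        # fraud-model:1.5.0
print(registry.rollback())  # fraud-model:1.4.0
```

A production setup would usually pair this with monitoring, so that a rollback can be triggered when error rates or latency exceed agreed limits.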

Key Capabilities

- Model lifecycle management: Automated pipelines for training, validation, deployment, monitoring, and continuous improvement

- Feature engineering at scale: Transform business, operational, document, and streaming data into ML-ready features using scalable compute and lakehouse architectures

- Continuous evaluation and drift detection: Monitor model quality, concept drift, prompt behaviour, and inference performance as data evolves

- LLMOps and agent orchestration: Manage prompts, retrieval, evaluation, tool integrations, and multi-agent execution across enterprise workflows

- API-driven deployment: Integrate AI into ERP, CRM, EHR, command-and-control, service platforms, and internal enterprise systems

- Governance and observability: Enable traceability, access control, monitoring, and human oversight for business-critical AI systems
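
As one example of drift detection, the sketch below computes the Population Stability Index (PSI) between a baseline sample and a live sample of a single numeric feature. PSI is only one of several possible drift measures; the bin count, the small floor for empty bins, and the sample data are assumptions made for illustration.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 5) -> float:
    """Population Stability Index using equal-width bins over the baseline range."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # degenerate baseline: all values fall into the first bin

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]       # roughly uniform on [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]  # live data concentrated in the upper half

print(psi(baseline, baseline))  # 0.0: identical distributions
print(psi(baseline, shifted))   # clearly positive: the distribution has moved
```

A monitoring job could compute a score like this per feature on a schedule and raise an alert, or trigger retraining, when it crosses a threshold agreed with the model owner.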

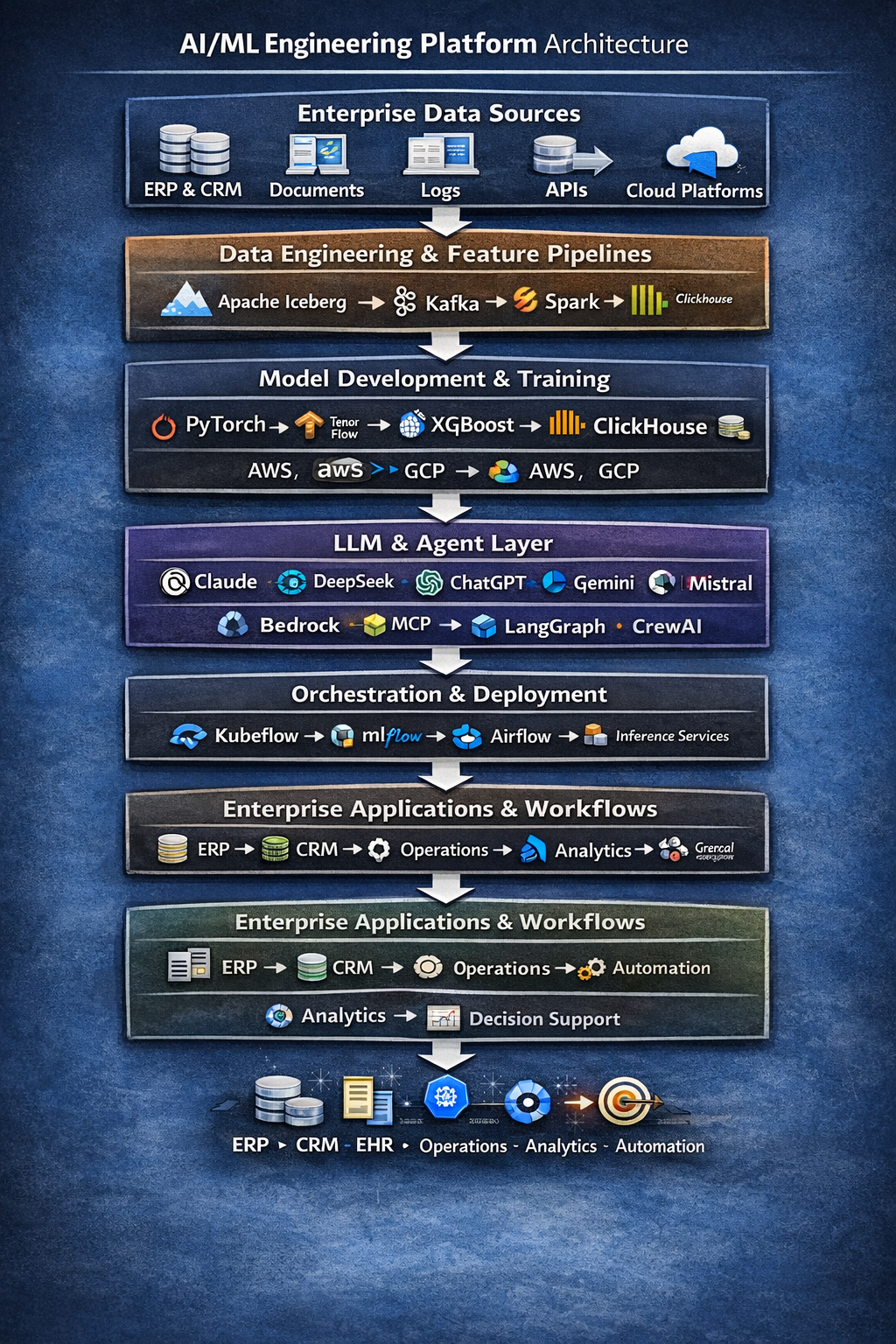

Architecture Overview

Trovix AI/ML engineering platforms are built as modular enterprise architectures that support both traditional machine learning workloads and advanced generative AI, agentic AI, and LLM-powered applications.

Enterprise Data Sources

ERP / CRM / EHR / Documents / Logs / APIs / Cloud Platforms / Streaming Events

↓

Data Engineering & Feature Pipelines

Apache Iceberg / Kafka / Spark / ClickHouse / Cloud Storage / Batch & Streaming Pipelines

↓

Model Development & Training

PyTorch / TensorFlow / XGBoost / SageMaker / Vertex AI / Azure Machine Learning / NVIDIA GPUs

↓

LLM & Agent Layer

Claude / DeepSeek / ChatGPT / Gemini / LLaMA / Mistral / Bedrock / RAG / MCP / LangGraph / CrewAI

↓

Orchestration & Deployment

Kubeflow / MLflow / Airflow / Kubernetes / APIs / Inference Services / Workflow Automation

↓

Enterprise Applications & Workflows

ERP / CRM / EHR / Operations / Analytics / Automation / Decision Support

Business Outcomes

Our approach focuses on modular, reusable, production-grade AI components that minimise technical debt and shorten time-to-value. Clients benefit from AI systems that are easier to deploy, easier to govern, and easier to scale.

- Faster movement from prototype to production

- Improved reliability of AI and ML systems

- Lower operational risk and stronger model governance

- Better integration with enterprise platforms and workflows

- More scalable foundations for AI products, copilots, and intelligent automation