LLMStack©

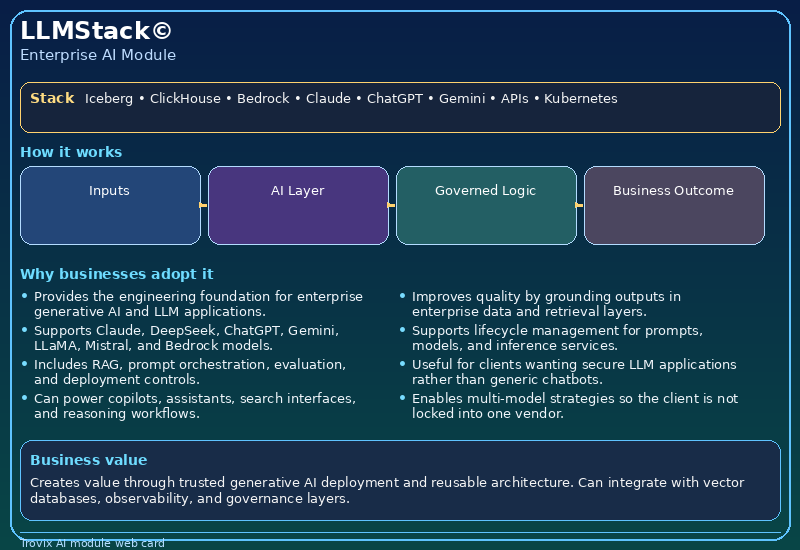

Provides the engineering foundation for enterprise generative AI and LLM applications.

LLMStack© is Trovix.ai’s enterprise platform for organisations that need an engineering foundation for enterprise generative AI and LLM applications, and that want to connect that capability to a broader operating model spanning AI agents, LLM applications, predictive analytics, RAG, vector search, governed deployment, knowledge systems, workflow automation, and enterprise data platforms.

Many organisations can build a pilot, a dashboard, or a basic chatbot. Far fewer can turn that into a production-ready capability that integrates with documents, enterprise systems, APIs, operational telemetry, business workflows, approval controls, and scalable delivery patterns. That is where LLMStack© creates value.

LLMStack© is designed for organisations that want more than generic AI messaging. It is built for environments where AI must operate across documents, enterprise systems, APIs, workflows, decision processes, operational platforms, governed data layers, and secure runtime controls. It helps clients move from isolated experiments to a real enterprise AI architecture.

LLMStack© works especially well with ModelForge©, FeatureFlow©, ModelOps©, DeployAI©, ConnectAI©, HybridDeploy©, DeployOps©, and GuardAI© to create a more connected ecosystem across analytics, agents, knowledge, deployment, governance, and operational execution.

What LLMStack© Does

LLMStack© supports a practical implementation model for enterprise AI rather than a disconnected proof of concept. It can be deployed as a focused capability, but it becomes much more powerful when connected to adjacent modules, shared data foundations, and governed cloud delivery. In practical terms, organisations use this module when they need AI to operate inside real business conditions rather than in a lab-style environment.

- Provides the engineering foundation for enterprise generative AI and LLM applications.

- Supports Claude, DeepSeek, ChatGPT, Gemini, LLaMA, Mistral, and Bedrock models.

- Includes RAG, prompt orchestration, evaluation, and deployment controls.

- Can power copilots, assistants, search interfaces, and reasoning workflows.

- Improves quality by grounding outputs in enterprise data and retrieval layers.

- Supports lifecycle management for prompts, models, and inference services.

LLMStack© is especially useful where the organisation needs structured delivery across business logic, retrieval, automation, APIs, approvals, analytics, and multi-step execution. It can support greenfield AI programmes, but it is equally relevant for enterprises modernising legacy environments with APIs, event-driven pipelines, workflow tools, document repositories, line-of-business systems, and managed cloud services.

Why Organisations Need LLMStack©

As enterprise AI use becomes more advanced, businesses need systems that can combine governed data access, workflow-aware execution, modern AI models, cloud-native delivery, and business control points. The challenge is not only choosing a model. It is making AI reliable, secure, observable, and useful across real business environments.

LLMStack© addresses that challenge by giving organisations a more structured way to connect AI capability to measurable delivery. It enables multi-model strategies so the client is not locked into one vendor, and it creates value through trusted generative AI deployment and reusable architecture.
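
One way to picture a multi-model strategy is a thin routing layer that tries providers in a preferred order and falls back when one fails. The sketch below is illustrative only and is not LLMStack©'s actual interface: `ModelRouter`, `Provider`, and the two stand-in providers are hypothetical names, and a real deployment would wrap vendor SDK or gateway calls where the stubs are.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# Hypothetical abstraction: a provider is any callable mapping a prompt to text.
# In a real deployment each callable would wrap a vendor SDK or gateway call.
Provider = Callable[[str], str]


@dataclass
class ModelRouter:
    providers: Dict[str, Provider]
    preference: List[str]  # provider names in the order they should be tried

    def complete(self, prompt: str) -> Tuple[str, str]:
        """Return (provider_name, completion) from the first provider that succeeds."""
        errors = []
        for name in self.preference:
            try:
                return name, self.providers[name](prompt)
            except Exception as exc:  # broad on purpose: any failure triggers fallback
                errors.append(f"{name}: {exc}")
        raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in providers so the sketch runs without network access.
def unavailable_provider(prompt: str) -> str:
    raise TimeoutError("upstream timeout")


def echo_provider(prompt: str) -> str:
    return f"echo: {prompt}"


router = ModelRouter(
    providers={"primary": unavailable_provider, "secondary": echo_provider},
    preference=["primary", "secondary"],
)
used, answer = router.complete("Summarise the open incidents.")
```

Because callers depend only on the router, changing the preferred vendor becomes a change to the `preference` list rather than to application code.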

In many organisations, the first AI project is technically promising but commercially weak because it lacks integration, governance, explainability, workflow fit, or a scalable deployment model. Trovix.ai positions LLMStack© as a corrective to that pattern. It is designed to make AI operate as a business capability that can be monitored, extended, and governed across teams, not just demonstrated in isolation.

Core Capabilities

Structured AI Engineering

Build repeatable foundations for training, evaluation, deployment, monitoring, and lifecycle control across ML and LLM systems.

This capability is strengthened when LLMStack© is connected to adjacent Trovix.ai modules such as ModelForge©, FeatureFlow©, ModelOps©, and DeployAI©. That gives the client a more coherent product architecture and makes the wider site feel like a genuine enterprise AI platform rather than a collection of disconnected pages.

Reusable Delivery Patterns

Standardise pipelines, model services, prompt workflows, evaluation, and inference delivery so teams avoid fragile one-off builds.

Production Reliability

Support retraining, observability, versioning, rollback, and runtime quality across business-critical AI workloads.
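
As a minimal sketch of what versioning and rollback can mean for prompts, the example below keeps every published template and moves an "active" pointer back on rollback. `PromptRegistry` and its methods are hypothetical names used for illustration, not a Trovix.ai or third-party API; a production setup would more likely persist versions in a model or prompt registry.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptRegistry:
    versions: List[str] = field(default_factory=list)  # full history, oldest first
    active: int = -1  # index of the version currently served; -1 means none

    def publish(self, template: str) -> int:
        """Store a new template, make it active, and return its 1-based version."""
        self.versions.append(template)
        self.active = len(self.versions) - 1
        return self.active + 1

    def rollback(self) -> int:
        """Reactivate the previous version and return its 1-based version."""
        if self.active <= 0:
            raise ValueError("no earlier version to roll back to")
        self.active -= 1
        return self.active + 1

    def render(self, **values: str) -> str:
        """Fill the active template with the supplied values."""
        if self.active < 0:
            raise ValueError("no prompt published")
        return self.versions[self.active].format(**values)


registry = PromptRegistry()
registry.publish("Summarise: {text}")
registry.publish("Summarise in one sentence: {text}")
latest = registry.render(text="Q3 incident report")
registry.rollback()
restored = registry.render(text="Q3 incident report")
```

Keeping the history append-only means a rollback never destroys the newer template, so it can be inspected or republished later.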

Enterprise Scale

Provide the discipline needed for cloud, hybrid, and governed AI rollout across large organisations and multiple business domains.

Technologies Behind LLMStack©

LLMStack© can be implemented using modern enterprise AI, ML, data, cloud, and orchestration technologies such as MLflow, Kubeflow, Airflow, Ray, Ray Serve, vLLM, TensorRT-LLM, model registry, prompt evaluation, guardrails, feature stores, SageMaker Pipelines, Azure Machine Learning, Vertex AI Pipelines, Databricks, Snowflake, Apache Iceberg, ClickHouse, Triton Inference Server, CI/CD, experiment tracking, batch inference, and scalable serving gateways.

Depending on the client architecture, Trovix.ai can also support model ecosystems and delivery patterns spanning Anthropic Claude Opus 4.7, Claude Opus 4.6, Claude Sonnet 4.5, Claude Haiku 4.5, Amazon Bedrock, Azure AI Foundry, Azure OpenAI, Vertex AI Model Garden, Vertex AI Agent Builder, Google Gemini, DeepSeek, open-source Llama and Mistral deployments, Cohere, NVIDIA NIM, Databricks Mosaic AI, Snowflake Cortex, Oracle OCI Generative AI, IBM watsonx, MCP servers, ADK, LangGraph, CrewAI, AutoGen, Semantic Kernel, LlamaIndex, Weaviate, Pinecone, Qdrant, Milvus, pgvector, Apache Iceberg, ClickHouse, Spark, Kafka, OpenTelemetry, MLflow, Kubeflow, Airflow, Ray Serve, and Triton Inference Server.

Where clients need secure enterprise deployment, SecureLLMOps©, GuardAI©, TrustFabric©, and GovernDeploy© can help ensure the runtime is governed, traceable, and aligned with enterprise policy requirements. Where runtime scale matters, DeployAI©, DeployOps©, HybridDeploy©, and ConnectAI© help shape deployment and service integration patterns across cloud, hybrid, or private environments.

These technologies are not included as buzzwords alone. They matter because different clients require different delivery models. Some need managed model services. Some need private inference. Some need regulated deployment with evidence trails. Some need lakehouse analytics, AI agents, and retrieval systems working together. Trovix.ai uses the technology stack around LLMStack© to support that practical diversity.

How LLMStack© Works in Practice

Operational and Business Integration

LLMStack© can be connected to ERP, CRM, EHR, service desks, ticketing tools, planning systems, knowledge bases, dashboards, workflow engines, APIs, and operational records so AI becomes part of real delivery rather than a disconnected interface. This is important where the user wants measurable performance change, not just a new screen.

Knowledge, Data, and Context

Where context matters, LLMStack© can operate with modules such as KnowledgeAI©, DomainQuery©, InsightEngine©, and InsightDash© to combine enterprise knowledge, data, telemetry, and analytical signals. That gives the module stronger grounding and makes outputs more useful for operators, analysts, managers, and workflow participants.
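
To make the idea of grounding concrete, the sketch below retrieves the most relevant passages for a question and places them in the prompt as numbered sources. It is a simplified illustration under stated assumptions: the function names are hypothetical, the sample documents are invented, and word-count cosine similarity stands in for the embedding model and vector database a real retrieval layer would use.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return up to k documents with non-zero similarity, best match first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def build_grounded_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Assemble a prompt that restricts the model to the retrieved sources."""
    context = retrieve(query, documents, k)
    sources = "\n".join(f"[{i + 1}] {doc}" for i, doc in enumerate(context))
    return (
        "Answer using only the sources below.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


# Invented sample knowledge base for illustration.
docs = [
    "Invoices are approved by the finance team within five days.",
    "The service desk handles password resets.",
    "Holiday requests go to line managers.",
]
prompt = build_grounded_prompt("Who approves invoices?", docs)
```

Passages with no overlap with the question are left out of the prompt, which is the basic mechanism by which retrieval keeps answers tied to enterprise content.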

Workflow and Action Layers

Where business execution matters, LLMStack© can work with AgentBridge©, AgentFlow©, FlowPilot©, RouteMind©, DecideAI©, or TaskAssist© to support multi-step workflow progression, approvals, routing, and action orchestration. This allows AI to support real process movement, not just analysis.

Deployment, Governance, and Scale

Where production resilience matters, LLMStack© can be delivered alongside DeployAI©, DeployOps©, HybridDeploy©, ModelOps©, and ComplianceMesh© to support observability, rollback, security, hybrid deployment, and long-term scale-up. This makes the module viable for organisations that expect enterprise service quality, not just prototype-level output.

Commercial Value and Adoption

Clients do not adopt LLMStack© because they want another technology label on the website. They adopt it because they need a practical answer to a business problem. That problem may be slow investigation, weak forecasting, repetitive support work, low visibility, fragile deployment, disconnected knowledge, poor workflow coordination, or a lack of governance around AI use. LLMStack© is positioned to solve that problem in a way that is commercially credible.

A strong adoption pattern begins with one use case, one team, or one workflow. Trovix.ai then expands that capability outward using adjacent modules. A client who starts with LLMStack© may later adopt ModelForge©, FeatureFlow©, ModelOps©, DeployAI©, and ConnectAI© to create a broader AI operating model. This is how the Trovix.ai site begins to feel like a genuine product ecosystem rather than a set of individual service pages.

Because the site positions these modules together, each page should reinforce the idea that Trovix.ai can sell not just a consultancy engagement but an actual stack of repeatable enterprise AI capabilities. LLMStack© contributes to that story by giving the client a named module, a clear business purpose, visible linkages to related modules, and a credible technology narrative.

Business Benefits

- Supports lifecycle management for prompts, models, and inference services.

- Useful for clients wanting secure LLM applications rather than generic chatbots.

- Enables multi-model strategies so the client is not locked into one vendor.

- Can integrate with vector databases, observability, and governance layers.

- Creates value through trusted generative AI deployment and reusable architecture.

Where LLMStack© Fits in the Trovix.ai Platform

LLMStack© is part of a wider Trovix.ai ecosystem rather than a standalone page. It often works alongside:

- ModelForge©

- FeatureFlow©

- ModelOps©

- DeployAI©

- ConnectAI©

- HybridDeploy©

- DeployOps©

- GuardAI©

- TrustFabric©

- SecureLLMOps©

This cross-linking matters because it helps the site feel like a real enterprise product architecture. A client may begin with LLMStack©, then expand into adjacent capabilities covering forecasting, decision intelligence, internal assistants, multi-agent orchestration, knowledge retrieval, deployment, or governance.

That ecosystem story is commercially useful. It gives Trovix.ai more than one thing to sell. It also makes the site look more like a product-led AI company with modules that reinforce each other across analytics, data, agents, security, deployment, and workflow automation.

Who LLMStack© Is For

LLMStack© enables multi-model strategies so the client is not locked into one vendor. It is especially relevant for organisations operating in finance, healthcare, logistics, supply chain, manufacturing, operations centres, support environments, public sector delivery, regulated enterprise workflows, and knowledge-intensive business environments.

It is also useful for organisations that want to modernise AI adoption without rebuilding everything from scratch. By connecting into existing systems, cloud services, data platforms, security controls, and business processes, LLMStack© supports a more realistic path from pilot to operational value.

Why LLMStack© Matters

Without a structured module such as LLMStack©, enterprise AI often becomes too narrow, too fragile, or too dependent on one disconnected workflow or assistant. That limits reliability, scalability, governance, and business usefulness.

LLMStack© gives organisations a more structured way to deploy AI in real enterprise contexts while strengthening the wider Trovix.ai ecosystem across analytics, agents, governance, deployment, workflow intelligence, and knowledge systems.

That is ultimately why this page exists. It is not only to describe a feature. It is to present LLMStack© as a named capability that fits into a bigger Trovix.ai proposition: a serious enterprise AI company with practical modules, strong technology depth, and cross-linked solutions that clients can understand and buy.

Talk to Trovix.ai

If your organisation wants to explore LLMStack© as part of a broader architecture spanning Claude models, Bedrock, Azure AI Foundry, Vertex AI, LLMOps, MLOps, MCP-connected agents, lakehouse analytics, workflow intelligence, and enterprise-grade AI operations, Trovix.ai can help define the roadmap and implement the platform in a way that delivers measurable business value.

Contact: